Yifei Wang

Hi, I'm Yifei Wang

Second-year PhD student · Rice University

I work on generative models — diffusion, flow matching, and the building blocks that make them more efficient and more controllable.

News

- New blog post: EMA: A Quiet Hyperparameter That Moves Diffusion Leaderboards.

- Joining Alan Yuille's lab at JHU as a visiting student for the summer.

- Released DSR (with Apple).

- Uni-Instruct (with Xiaohongshu Inc.) accepted to NeurIPS 2025.

- Started PhD at Rice University, working with Chen Wei.

- EM-Diffusion accepted to NeurIPS 2024.

Selected work

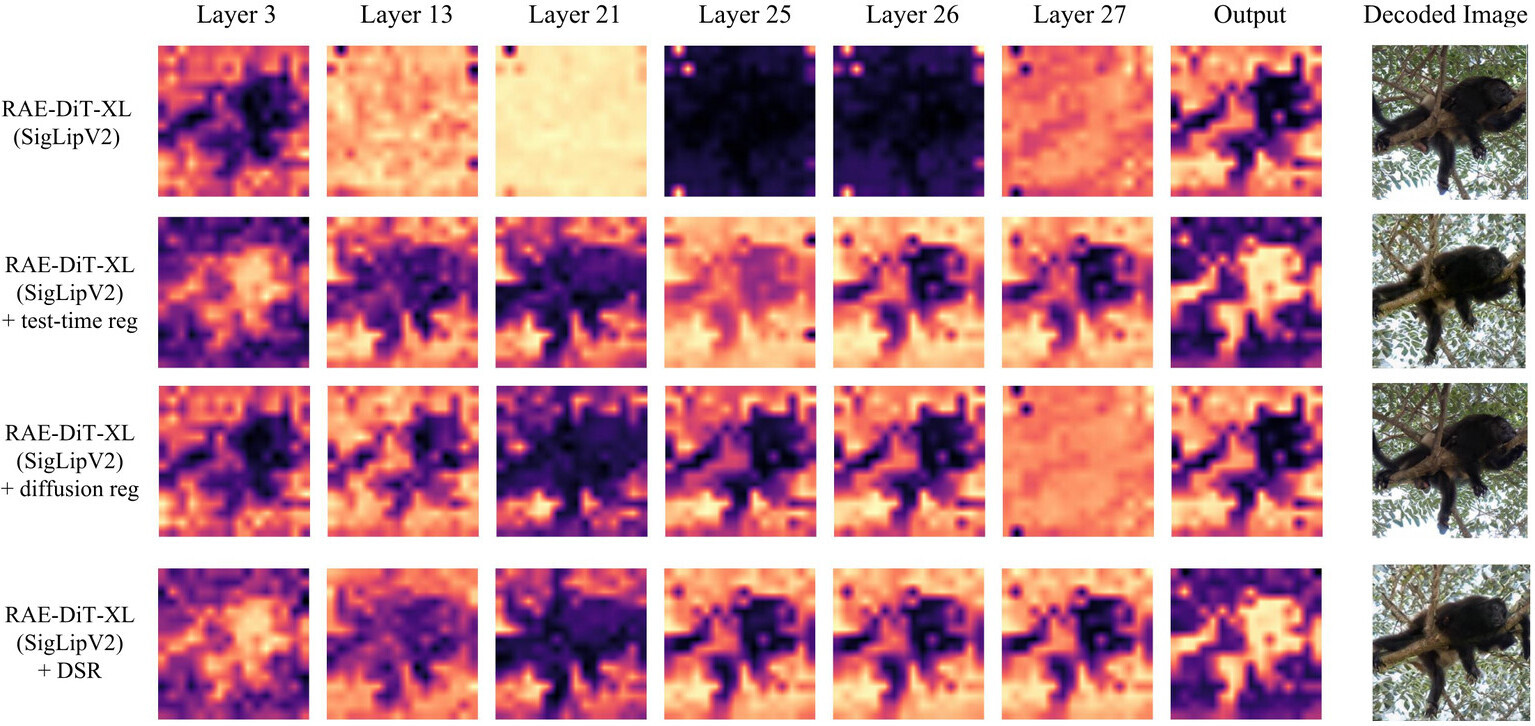

Taming Outlier Tokens in Diffusion Transformers

Preprint · 2026

Outlier patch tokens hurt both ViT encoders and diffusion transformers in RAE-DiT pipelines. Our Dual-Stage Registers patch both sides and improve ImageNet-256 FID from 5.89 to 4.58 at 80 epochs.

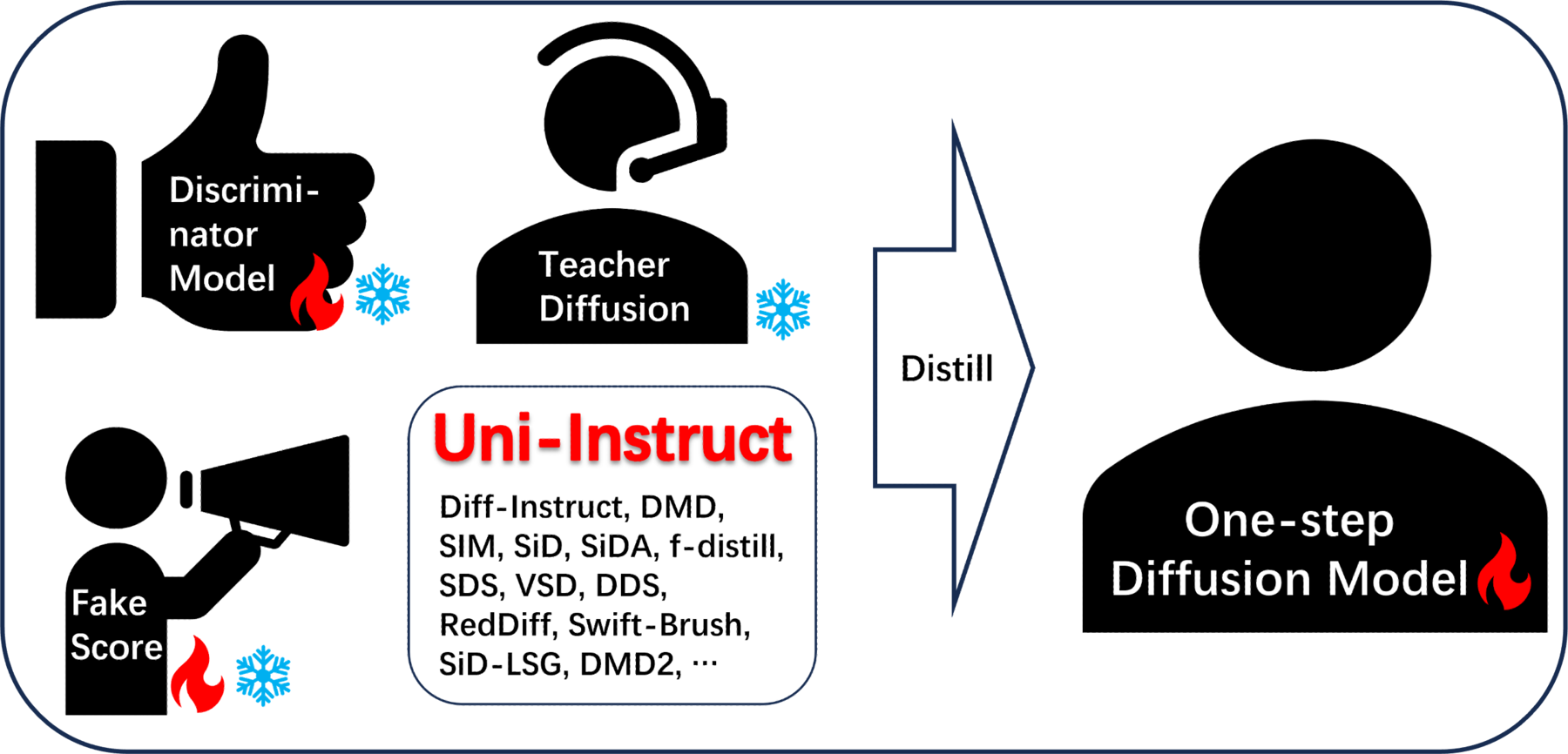

Uni-Instruct: One-step Diffusion through Unified Divergence Instruction

NeurIPS · 2025

A single f-divergence framework that subsumes 10+ one-step diffusion distillation methods (Diff-Instruct, DMD, SiD, SIM, …) — and a new SoTA one-step FID of 1.02 on ImageNet 64×64, beating the 35-NFE EDM teacher.

About

I'm a second-year PhD student at Rice University, where I work with Chen Wei. In May 2026, I'll be visiting Alan Yuille's lab at Johns Hopkins University. Before Rice, I received my B.S. from Peking University, advised by He Sun and Weijian Luo. My research focuses on generative modeling, primarily diffusion models, with an emphasis on the theory and the practical bottlenecks that govern their training.

Outside the lab, I run and hike a lot. I've finished several half marathons, and once spent a summer doing ecological field research in Saihanba and Xihaigu. I also write Chinese-language blogs on Zhihu.